While designing and implementing solutions, I often need to set up recurring batch jobs for data storage and processing. Recently I have been trying to keep my infrastructure as serverless as possible, so in this article I will show you how Google Cloud Platform can be leveraged to run almost any batch job your project might need for free.

Use Cases

For me, this batch pattern is most useful when it comes to data processing, reconciliation, and cleanup. Here is an example involving data aggregation…

A bucket can be an effective repository for streaming data, but if your payloads are small and frequent, keeping a file for every payload can get expensive when you have to do frequent reads. I solve this problem by running a batch job that merges individual payloads into hourly or daily files, which makes for a much more cost-effective solution.
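To make the merge step concrete, here is a minimal sketch of the grouping logic in Node.js. The payload shape (a `timestamp` field holding an ISO-8601 string), the `.ndjson` file naming, and the function names are assumptions made for illustration; reading from and writing to the bucket is left out entirely.

```javascript
// Hypothetical payload shape: { timestamp: '<ISO-8601 string>', ...anything else }.

// Reduce a timestamp to its UTC hour, e.g. "2024-05-01T13".
function hourKey(isoTimestamp) {
  return new Date(isoTimestamp).toISOString().slice(0, 13);
}

// Group payloads by hour and render one newline-delimited JSON document per hour.
// Returns an object mapping an (assumed) file name to that file's contents.
function mergeByHour(payloads) {
  const grouped = new Map();
  for (const payload of payloads) {
    const key = hourKey(payload.timestamp);
    if (!grouped.has(key)) {
      grouped.set(key, []);
    }
    grouped.get(key).push(payload);
  }

  const mergedFiles = {};
  for (const [key, items] of grouped) {
    mergedFiles[`${key}.ndjson`] = items.map((item) => JSON.stringify(item)).join('\n');
  }
  return mergedFiles;
}
```

Switching to daily files would only require slicing the timestamp to 10 characters instead of 13.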

Or how about database cleanup…

If you have a SQL database containing large time series data sets, regular purging is critical for performance. You can squeeze a recurring job into a web application or the ETL system that loads data into your tables. However, I solve this with the serverless batch approach to decouple the solution and simplify maintenance.
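As a rough sketch of what such a purge job might compute, the snippet below builds a parameterized DELETE statement for a retention window. The table and column names passed in, the `$1` placeholder style (as used by PostgreSQL clients), and the function names are all assumptions; actually executing the statement depends on your database driver and is not shown.

```javascript
const MS_PER_DAY = 24 * 60 * 60 * 1000;

// Everything older than this instant is eligible for purging.
function retentionCutoff(now, retentionDays) {
  return new Date(now.getTime() - retentionDays * MS_PER_DAY);
}

// Build a parameterized purge statement. Identifiers cannot be bound as
// parameters, so they are validated against a conservative pattern instead.
function buildPurgeStatement(table, timestampColumn, now, retentionDays) {
  const identifier = /^[A-Za-z_][A-Za-z0-9_]*$/;
  if (!identifier.test(table) || !identifier.test(timestampColumn)) {
    throw new Error('Invalid table or column name');
  }
  return {
    // "$1" is the PostgreSQL-style placeholder; other databases use "?" or similar.
    text: `DELETE FROM ${table} WHERE ${timestampColumn} < $1`,
    values: [retentionCutoff(now, retentionDays).toISOString()],
  };
}
```

Keeping the cutoff calculation separate from the statement makes it easy to log what a run is about to delete before it touches the table.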

Architecture

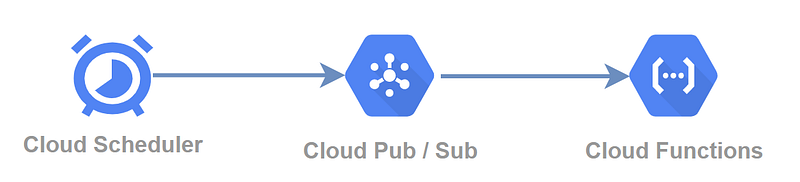

We will be using three GCP services to implement our serverless batch solution: Cloud Scheduler will trigger our batch events, and Pub/Sub will transmit the events to a Cloud Function that performs the required batch operation.

Pricing

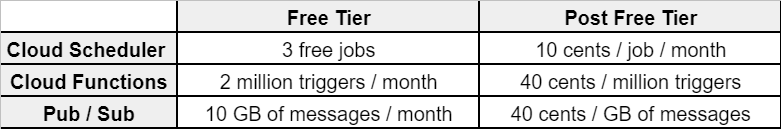

GCP offers a very generous free tier. I have made a simplified cost table for the three services we will need to schedule and run a batch job. A batch job running every 5 minutes will use up one Cloud Scheduler job, ~9,000 Cloud Function executions per month, and ~9MB of Pub/Sub throughput per month.

If you need more than 3 jobs across your projects, you will be charged 10 cents USD per month for every additional job.
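The usage figures above can be reproduced with some quick arithmetic. This is only a sketch: the 31-day month and the assumption that each Pub/Sub message is billed at roughly 1,000 bytes are mine, so check the current pricing pages before relying on the numbers.

```javascript
const MINUTES_PER_DAY = 24 * 60;

// Assumption: each small scheduler message counts as about 1,000 bytes of throughput.
const ASSUMED_BILLED_BYTES_PER_MESSAGE = 1000;

// Estimate monthly usage for a single job that fires every `intervalMinutes`.
function estimateMonthlyUsage(intervalMinutes, daysInMonth = 31) {
  const runsPerDay = Math.floor(MINUTES_PER_DAY / intervalMinutes);
  const runsPerMonth = runsPerDay * daysInMonth;
  return {
    schedulerJobs: 1,
    functionInvocations: runsPerMonth,
    pubSubMegabytes: (runsPerMonth * ASSUMED_BILLED_BYTES_PER_MESSAGE) / 1e6,
  };
}
```

For a 5-minute interval this works out to 288 runs per day, or 8,928 runs in a 31-day month, which is where the ~9,000 executions and ~9MB figures come from.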

Configuration

Pub/Sub

First, let’s configure a Pub/Sub topic, as it will be required when setting up both the scheduler and the serverless function. Topics can be configured here in your GCP console.

- As you can see, all we have to configure is the topic name. I am using example-topic

Cloud Scheduler

Next, we configure the Cloud Scheduler as our batch trigger. Head here and create your scheduler job.

- In my example, I am configuring the scheduler to run every 30 minutes but you can set any period desired

- Specify the topic we created in the previous step. I am using example-topic

- Our Cloud Function will not need anything except the trigger from the scheduler, so the payload value is not important and you can put any value. I am using run
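Cloud Scheduler expects the frequency in unix-cron format, so "every 30 minutes" has to be written as a cron expression. The helper below is a small sketch for producing one; the function names and the shape of the summary object are hypothetical and only mirror the three values entered in the console, not any Cloud Scheduler API request.

```javascript
// Build a unix-cron expression that fires every `minutes` minutes.
// Note: the runs are only evenly spaced when `minutes` divides 60.
function everyNMinutes(minutes) {
  if (!Number.isInteger(minutes) || minutes < 1 || minutes > 59) {
    throw new RangeError('minutes must be an integer between 1 and 59');
  }
  return minutes === 1 ? '* * * * *' : `*/${minutes} * * * *`;
}

// Plain summary of the values used in this article (illustrative only).
function describeExampleJob(minutes) {
  return {
    schedule: everyNMinutes(minutes),
    topic: 'example-topic',
    payload: 'run',
  };
}
```

Calling `everyNMinutes(30)` returns `*/30 * * * *`, which is the value to enter in the frequency field for the schedule used here.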

Cloud Function

We are almost done. The last step is to create a Cloud Function that runs whenever the scheduler publishes an event. You can find Cloud Function configuration here.

- Select Cloud Pub/Sub as the trigger type

- Select the topic created in the first step

- Proceed to the code configuration — I will be using the out of the box Node.js function. The function simply logs the contents of the Pub/Sub payload.
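For reference, a function along these lines might look like the sketch below. It assumes the background-function style signature `(event, context)`, where the Pub/Sub message body arrives base64-encoded in `event.data`; newer runtime generations deliver a CloudEvent instead, so the template in your console may differ. The names `decodePayload` and `logPayload` are my own.

```javascript
// Decode the base64-encoded Pub/Sub message body; empty string if there is none.
function decodePayload(event) {
  if (!event || !event.data) {
    return '';
  }
  return Buffer.from(event.data, 'base64').toString('utf8');
}

// Entry point: log the payload sent by the scheduler.
// A real batch job would start its work here instead of only logging.
function logPayload(event, context) {
  const payload = decodePayload(event);
  console.log(`Batch trigger received, payload: ${payload}`);
  return payload;
}

exports.logPayload = logPayload;
```

Because the decoding is a plain function, it can be exercised locally by passing in an object such as `{ data: Buffer.from('run').toString('base64') }` before anything is deployed.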

The function might take a minute to fully deploy.

Keep in mind that you can use any of the available programming languages in this step.

Testing

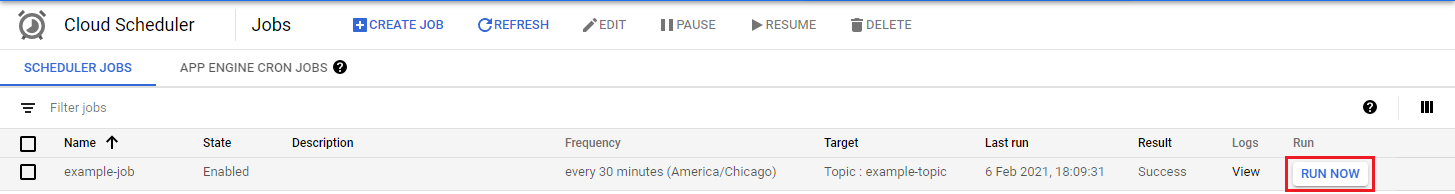

To test our configuration, we need to head over to the Cloud Scheduler list and manually trigger our scheduler job using the RUN NOW option.

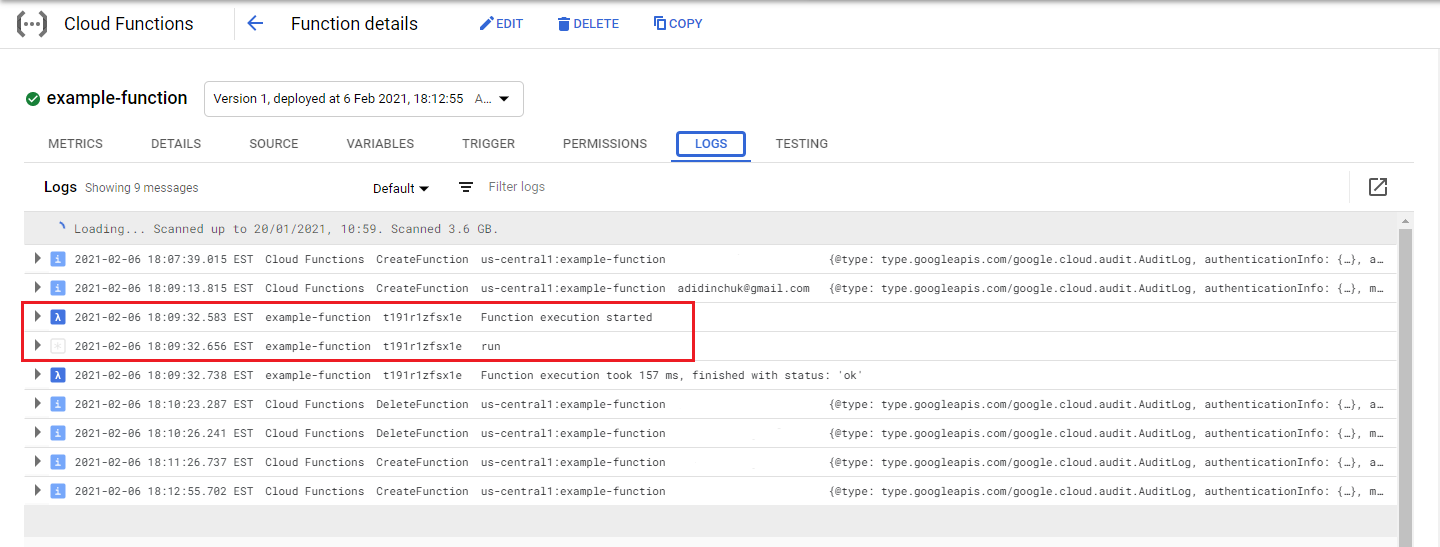

To make sure our function was successfully triggered, we can head over to our Cloud Function list, select the function we configured earlier, and check out the Logs tab.

You should see log output indicating that your function ran, and the payload message you configured for the Cloud Scheduler should also be displayed.

Success!

Conclusion

There you have it: in 5 minutes we configured a 100% free solution that you can use to run various types of batch jobs. If you ever find yourself needing to quickly set up a highly decoupled solution for kicking off or running batch jobs, you now have a quick and easy way to get it done using GCP.

Good luck and happy coding!